Waymo introduced a generative world model for autonomous driving simulation. It generates camera and lidar outputs so that rare, dangerous, and counterfactual road situations can be tested before cars meet them in the real world.

Overview

Waymo's world model adapts Google DeepMind's Genie 3 for autonomous driving simulation. Rather than relying only on real-world road miles, Waymo can use the system to generate realistic virtual scenarios that stress-test the Waymo Driver.

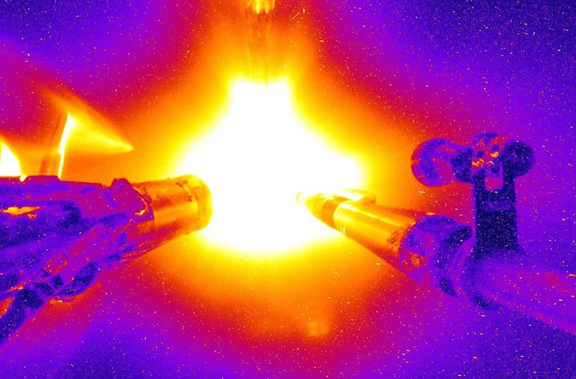

The model produces multimodal driving outputs, including camera and lidar-like data. That matters because autonomous vehicles do not see the road as a single video stream; perception, planning, and safety testing depend on multiple sensor channels.

Waymo highlights rare edge cases such as severe weather, unusual obstacles, and counterfactual route choices. Those are exactly the kinds of situations that are hard, slow, or unsafe to collect at scale with real cars.
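To make the idea of a scenario space concrete, here is a minimal sketch of how a test matrix over those three axes might be enumerated. Everything in it is an assumption made for illustration: the `Scenario` class, the condition lists, and the `is_rare` rule are hypothetical and do not reflect Waymo's or Genie 3's actual interfaces.

```python
from dataclasses import dataclass
from itertools import product

# All names below are hypothetical illustrations, not Waymo or Genie 3 APIs.


@dataclass(frozen=True)
class Scenario:
    weather: str
    obstacle: str
    route_choice: str


# Assumed condition axes, loosely following the edge cases named above.
WEATHER = ["clear", "heavy_rain", "snow"]
OBSTACLES = ["none", "fallen_tree", "stray_animal"]
ROUTE_CHOICES = ["logged_route", "alternate_turn"]


def build_scenarios():
    """Enumerate every combination of the three condition axes."""
    return [
        Scenario(w, o, r)
        for w, o, r in product(WEATHER, OBSTACLES, ROUTE_CHOICES)
    ]


def is_rare(scenario):
    """Flag combinations that pair non-clear weather with an obstacle."""
    return scenario.weather != "clear" and scenario.obstacle != "none"


scenarios = build_scenarios()
rare = [s for s in scenarios if is_rare(s)]
print(len(scenarios), len(rare))  # prints "18 8"
```

Even this toy grid shows why such cases are hard to collect on the road: 8 of the 18 combinations require bad weather and an obstacle to coincide, and a real scenario space would have far more axes than three.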

The breakthrough is not that simulation replaces public-road validation. It is that learned simulation can expand the set of scenarios that can be tested, helping engineers discover failures before passengers or pedestrians ever encounter them.

Why It Matters

- Generates realistic driving simulations from a learned world model.

- Supports camera and lidar-style outputs for multimodal autonomous-vehicle testing.

- Lets engineers probe rare events, extreme weather, and counterfactual driving decisions.

- Shows how world models can become safety infrastructure, not just flashy AI demos.